AIGENCY V3: Introducing Our Next-Generation Independent AI Engine

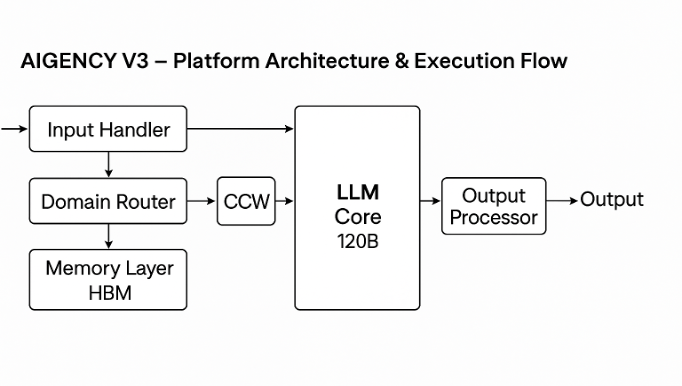

Our Turkey-based artificial intelligence research team has released its third-generation large language model, AIGENCY V3. This 120-billion-parameter core breaks entirely from traditional architectures built on external models, offering a local, auditable, and regulation-compliant infrastructure.

Why Is “V3” a Milestone?

- Zero External Dependency

- The Llama 3-based layers used in previous versions have been removed, and all parameters were restructured by AIGENCY engineers. As a result, all third-party risks in data, code, and update flows have been eliminated.

- 278K Token Context Window

- The new Contextual Core-Wrapping (CCW) architecture in V3 enables processing of long documents in a single pass. Reviewing contracts or working with hundreds of thousands of words in project archives is now a real-time capability.

- Parametric Efficiency

- With proprietary compression techniques, the parameter file has been reduced from 22 GB to just 6 GB, a reduction of roughly 73%. The full model can run on a local server with only 43 GB of GPU memory, cutting total cost of ownership by approximately 35% compared to global competitors.

- Security and Privacy

- Memory layers are encrypted with AES-256-XTS and ChaCha20-Poly1305. Each deletion request is cryptographically timestamped via blockchain, ensuring regulatory audit-readiness.
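
The idea of cryptographically timestamping deletion requests can be pictured as an append-only hash chain, where each record commits to the one before it. The sketch below is an illustration only and not AIGENCY's implementation: the record fields, the function names `append_deletion` and `verify_chain`, the `GENESIS` placeholder, and the choice of SHA-256 are all assumptions, since the announcement does not specify the blockchain or record format used.

```python
import hashlib
import json
import time

GENESIS = "0" * 64  # assumed placeholder "previous hash" for the first record


def _digest(body):
    # Canonical JSON so the same record always hashes to the same value.
    payload = json.dumps(body, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_deletion(chain, request_id, timestamp=None):
    """Append a deletion record linked to the previous record's hash."""
    body = {
        "request_id": request_id,
        "timestamp": time.time() if timestamp is None else timestamp,
        "prev_hash": chain[-1]["hash"] if chain else GENESIS,
    }
    record = dict(body, hash=_digest(body))
    chain.append(record)
    return record


def verify_chain(chain):
    """Return True if every record's hash and back-link are intact."""
    prev = GENESIS
    for record in chain:
        body = {k: v for k, v in record.items() if k != "hash"}
        if record["prev_hash"] != prev or record["hash"] != _digest(body):
            return False
        prev = record["hash"]
    return True


log = []
append_deletion(log, "req-001", timestamp=1700000000)
append_deletion(log, "req-002", timestamp=1700000060)
print(verify_chain(log))  # True

log[0]["request_id"] = "req-999"  # tamper with an earlier record
print(verify_chain(log))  # False
```

Because each record embeds the hash of its predecessor, an auditor who holds only the latest hash can detect any later change to an earlier deletion record.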

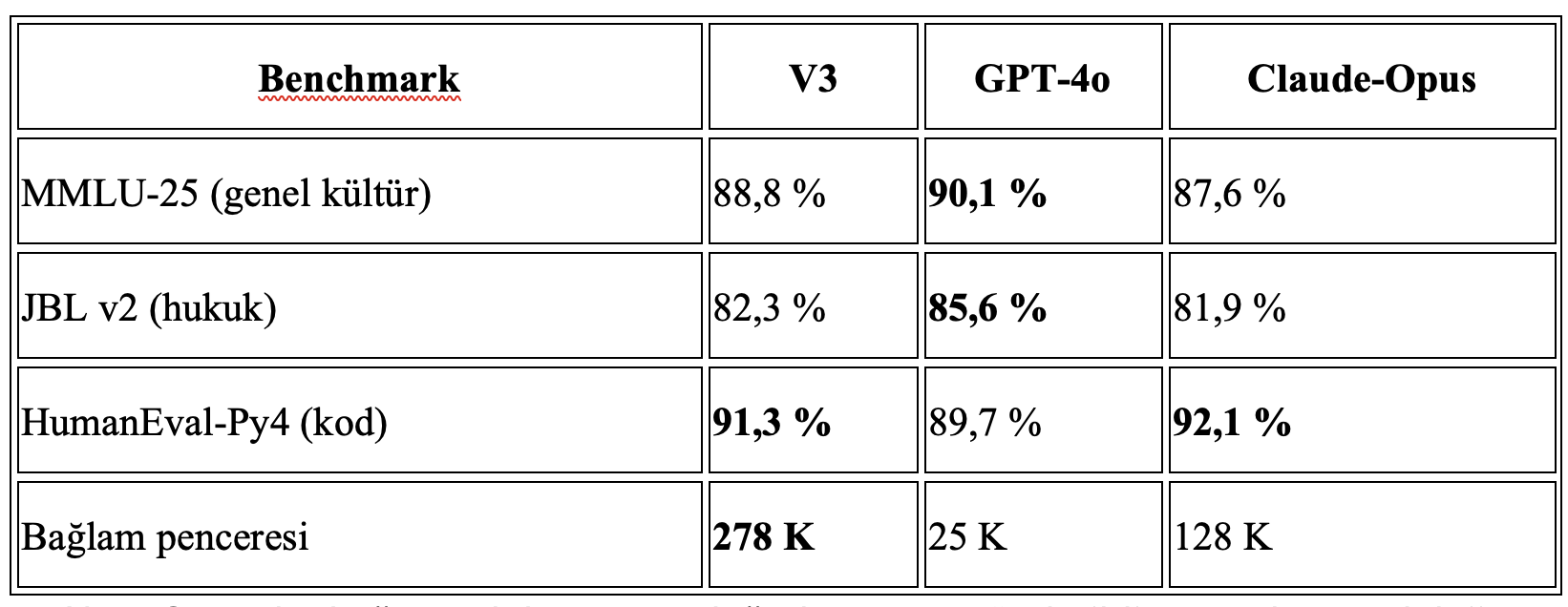

Note – Results have been submitted for independent lab validation. Full benchmarking methodology is included in the appendix of the technical paper.

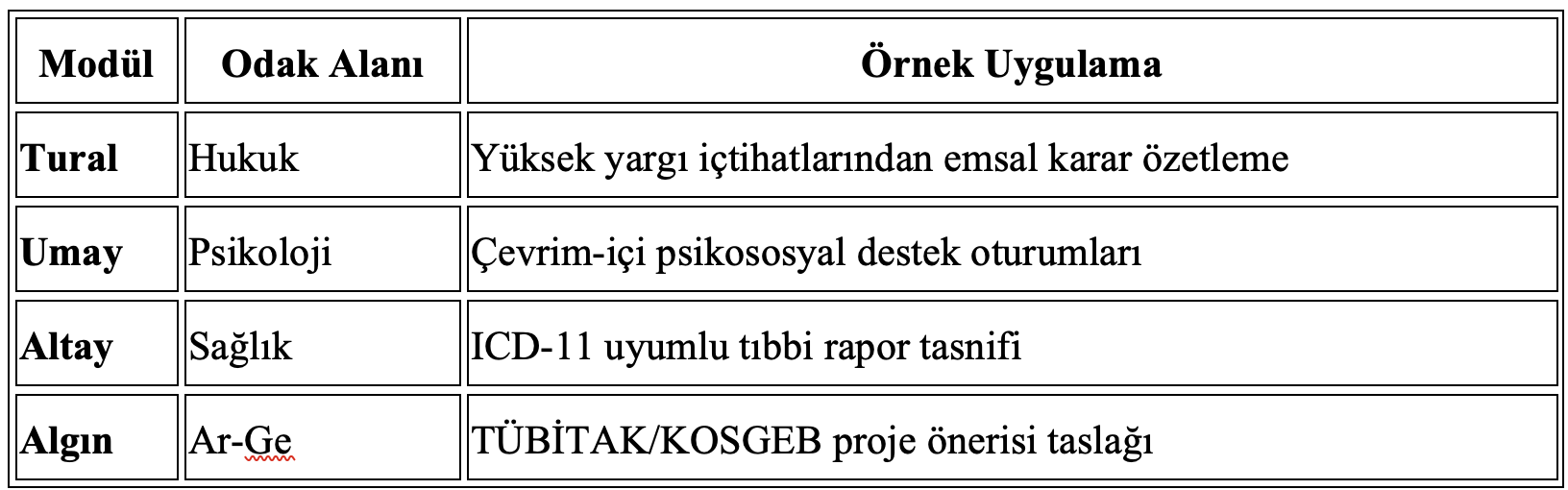

Where Is It Used?

Each module is integrated via low-rank (LoRA) overlays without altering the core model. This ensures both security and contextual isolation are maintained.
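
To illustrate how a low-rank overlay can adapt a layer while leaving the core weights untouched, here is a toy sketch in plain Python. The matrix sizes, the values, the "legal" module name, and the helper names `matmul` and `apply_overlay` are hypothetical; the announcement does not describe how AIGENCY V3 applies its overlays internally.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def apply_overlay(base, lora_b, lora_a, scale=1.0):
    """Return base + scale * (lora_b @ lora_a) without mutating base."""
    delta = matmul(lora_b, lora_a)
    return [
        [w + scale * d for w, d in zip(w_row, d_row)]
        for w_row, d_row in zip(base, delta)
    ]


# Frozen 3x3 base weights (toy values standing in for a core-model layer).
base = [[1.0, 0.0, 0.0],
        [0.0, 1.0, 0.0],
        [0.0, 0.0, 1.0]]

# Rank-1 overlay for a hypothetical "legal" module: B is 3x1, A is 1x3.
legal_b = [[1.0], [0.0], [2.0]]
legal_a = [[0.5, 0.0, 1.0]]

legal_weights = apply_overlay(base, legal_b, legal_a)
print(legal_weights)  # [[1.5, 0.0, 1.0], [0.0, 1.0, 0.0], [1.0, 0.0, 3.0]]
print(base)           # the base matrix is unchanged
```

Each module keeps only its own small `B` and `A` factors, so modules can be swapped or removed without touching the shared base weights. For an illustrative 4096 x 4096 layer with rank 8, the two factors hold 65,536 values compared with 16,777,216 in the full matrix.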

Download the Whitepaper

A 30-page technical whitepaper covering the full AIGENCY V3 architecture, training strategy, benchmark methodology, and roadmap is now available: aigency-v3-white-paper-v1-2025

SHA-256 Hash: 4f624b23981eedc4a3a04ec38d734d5c02539da57f1aa1377eadfb97650c0096

(If integrity mismatch occurs, please contact us via [email protected].)
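
One way to check a downloaded copy against the published hash is sketched below, using only the Python standard library. The function names are illustrative, and the file name in the usage comment is an assumption; substitute the path of the file you actually downloaded.

```python
import hashlib
import hmac

# Hash value as published in this announcement.
EXPECTED_SHA256 = "4f624b23981eedc4a3a04ec38d734d5c02539da57f1aa1377eadfb97650c0096"


def sha256_of_file(path, chunk_size=1 << 20):
    """Hash the file in chunks so large files are not loaded into memory at once."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_published_hash(path, expected=EXPECTED_SHA256):
    """Return True if the file's SHA-256 digest equals the expected hex string."""
    return hmac.compare_digest(sha256_of_file(path), expected.lower())


# Example usage (the file name is an assumption):
# matches_published_hash("aigency-v3-white-paper-v1-2025.pdf")
```

A `False` result means the file differs from the one the hash was computed over, for example because of an incomplete download.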

What’s Next?

- 2025/Q3: Prototype for Delta-coder-based next-gen compression

- 2025/Q4: Launch of post-quantum Kyber testnet

- 2026: Hierarchical Mixture-of-Experts production release, model cards signed with Falcon-1024

Community feedback is highly valuable to us, especially from research teams reproducing the model under academic licensing. We look forward to your insights and contributions.

AIGENCY

“Smart Solutions for Real People”